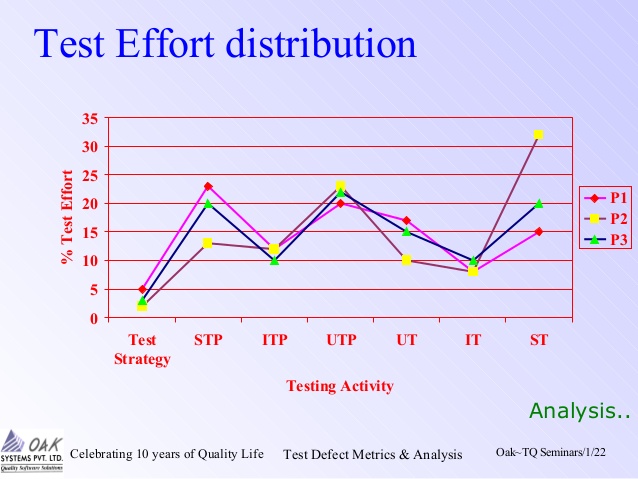

Sep 18, 2019. Software testing metrics improve the efficiency and effectiveness of a software testing process. A software testing metric, or software test measurement, is the quantitative indication of the extent, capacity, dimension, amount, or size of some attribute of a process or product. Example of a software test measurement: total number of defects.

- Defect density is the number of defects found in the software product per unit of code size. The "Defect Density" metric differs from the "Count of Defects" metric, as the latter does not provide management information.

- Defect Density: Another important software testing metric. The team counts the defects found in the software during a specific period of operation or development, then divides that count by the size of the module concerned, which helps the team decide whether the software is ready for...

A software metric is a standard of measure of a degree to which a software system or process possesses some property. Even if a metric is not a measurement (metrics are functions, while measurements are the numbers obtained by the application of metrics), often the two terms are used as synonyms. Since quantitative measurements are essential in all sciences, there is a continuous effort by computer science practitioners and theoreticians to bring similar approaches to software development. The goal is obtaining objective, reproducible and quantifiable measurements, which may have numerous valuable applications in schedule and budget planning, cost estimation, quality assurance, testing, software debugging, software performance optimization, and optimal personnel task assignments.

Common software measurements

Common software measurements include:

- Bugs per line of code

- Comment density[1]

- Cyclomatic complexity (McCabe's complexity)

- Defect density - defects found in a component

- Defect potential - expected number of defects in a particular component

- Defect removal rate

- DSQI (design structure quality index)

- Function Points and Automated Function Points, an Object Management Group standard[2]

Limitations

As software development is a complex process, with high variance on both methodologies and objectives, it is difficult to define or measure software qualities and quantities and to determine a valid and concurrent measurement metric, especially when making such a prediction prior to the detail design. Another source of difficulty and debate is in determining which metrics matter, and what they mean.[3][4] The practical utility of software measurements has therefore been limited to the following domains:

A specific measurement may target one or more of the above aspects, or the balance between them, for example as an indicator of team motivation or project performance.

Acceptance and public opinion

Some software development practitioners point out that simplistic measurements can cause more harm than good.[5] Others have noted that metrics have become an integral part of the software development process.[3] The impact of measurement on programmer psychology has raised concerns about harmful effects on performance due to stress, performance anxiety, and attempts to cheat the metrics, while others find that it has a positive impact on the value developers place on their own work and prevents them from being undervalued. Some argue that the definitions of many measurement methodologies are imprecise, and consequently it is often unclear how tools for computing them arrive at a particular result,[6] while others argue that imperfect quantification is better than none (“You can’t control what you can't measure.”).[7] Evidence shows that software metrics are widely used by government agencies, the US military, NASA,[8] IT consultants, academic institutions,[9] and commercial and academic development estimation software.

References

1. "Descriptive Information (DI) Metric Thresholds". Land Software Engineering Centre. Archived from the original on 6 July 2011. Retrieved 19 October 2010.

2. "OMG Adopts Automated Function Point Specification". Omg.org. 2013-01-17. Retrieved 2013-05-19.

3. Binstock, Andrew. "Integration Watch: Using metrics effectively". SD Times. BZ Media. Retrieved 19 October 2010.

4. Kolawa, Adam. "When, Why, and How: Code Analysis". The Code Project. Retrieved 19 October 2010.

5. Kaner, Dr. Cem. Software Engineer Metrics: What do they measure and how do we know?. CiteSeerX 10.1.1.1.2542.

6. Lincke, Rüdiger; Lundberg, Jonas; Löwe, Welf (2008). "Comparing software metrics tools" (PDF). International Symposium on Software Testing and Analysis 2008. pp. 131–142.

7. DeMarco, Tom. Controlling Software Projects: Management, Measurement and Estimation. ISBN 0-13-171711-1.

8. "NASA Metrics Planning and Reporting Working Group (MPARWG)". Earthdata.nasa.gov. Archived from the original on 2011-10-22. Retrieved 2013-05-19.

9. "USC Center for Systems and Software Engineering". Sunset.usc.edu. Retrieved 2013-05-19.

Retrieved from 'https://en.wikipedia.org/w/index.php?title=Software_metric&oldid=918231434'

In software projects, it is important to measure the quality, cost, and effectiveness of the project and its processes. Without these measurements, it is hard to tell whether a project is on track to complete successfully.

In today’s article, we will learn, with examples and graphs, what software test metrics and measurements are and how to use them in the software testing process.

There is a famous statement: “We can’t control things which we can’t measure”.

Here, controlling the project means that a project manager or lead can identify deviations from the test plan as early as possible and react in time. Generating test metrics based on the project's needs is important for achieving the required quality of the software being tested.

What are Software Testing Metrics?

A Metric is a quantitative measure of the degree to which a system, system component, or process possesses a given attribute.

Metrics can be defined as “STANDARDS OF MEASUREMENT”.

Software metrics are used to measure the quality of the project. Simply put, a metric is a unit used to describe an attribute; it is a scale for measurement.

For example, “Kilogram” is a metric for measuring the attribute “Weight”. Similarly, in software, consider the question “How many issues are found in a thousand lines of code?”. Here, the no. of issues is one measurement and the no. of lines of code is another measurement. The metric is defined from these two measurements.
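As a minimal sketch of how a metric is built from two measurements, the Python snippet below computes issues per thousand lines of code. The function name `defects_per_kloc` and the sample counts (12 issues, 4,000 lines) are assumptions made for illustration, not figures from a real project.

```python
def defects_per_kloc(defect_count: int, lines_of_code: int) -> float:
    """Combine two measurements into one metric: issues per 1,000 lines of code."""
    if lines_of_code <= 0:
        raise ValueError("lines_of_code must be positive")
    return defect_count / (lines_of_code / 1000)


# Hypothetical measurements: 12 issues found in 4,000 lines of code.
print(defects_per_kloc(12, 4000))  # 3.0
```

Neither count says much on its own; the ratio is what makes modules of different sizes comparable.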

Test metrics example:

- How many defects exist within the module?

- How many test cases are executed per person?

- What is the Test coverage %?

What is Software Test Measurement?

Measurement is the quantitative indication of extent, amount, dimension, capacity, or size of some attribute of a product or process.

Test measurement example: Total number of defects.

Refer to the diagram below for a clear understanding of the difference between measurement and metrics.

Why Test Metrics?

Generating software test metrics is a key responsibility of the software test lead/manager.

Test metrics are used to:

- Make decisions for the next phase of activities, such as estimating the cost and schedule of future projects.

- Understand the kind of improvement required for the project to succeed.

- Decide which process or technology should be modified.

Importance of Software Testing Metrics:

As explained above, test metrics are essential for measuring the quality of the software.

Now, how can we measure the quality of the software by using metrics?

Suppose a project does not have any metrics. How, then, would the quality of the work done by a test analyst be measured?

For example, a test analyst has to:

- Design the test cases for 5 requirements

- Execute the designed test cases

- Log the defects and fail the related test cases

- After a defect is resolved, re-test the defect and re-execute the corresponding failed test case.

In the above scenario, if metrics are not followed, then any assessment of the work completed by the test analyst will be subjective, i.e. the test report will not have the information needed to know the status of his/her work or project.

If metrics are used in the project, then the exact status of his/her work can be published with proper numbers and data.

That is, in the test report we can publish:

1. How many test cases have been designed per requirement?

2. How many test cases are yet to be designed?

3. How many test cases have been executed?

4. How many test cases passed, failed, or were blocked?

5. How many test cases are not yet executed?

6. How many defects have been identified, and what is the severity of those defects?

7. How many test cases failed due to one particular defect?

Based on the project's needs, we can have more metrics than those listed above, to know the status of the project in detail.

Based on the above metrics, the test lead/manager will get an understanding of the following key points:

a) %ge of work completed

b) %ge of work yet to be completed

c) Time to complete the remaining work

d) Whether the project is on schedule or lagging

Based on the metrics, if the project is not going to complete on schedule, the manager will alert the client and other stakeholders and explain the reasons for the delay, to avoid last-minute surprises.

Metrics Life Cycle:

Types of Manual Test Metrics:

Testing Metrics are mainly divided into 2 categories.

- Base Metrics

- Calculated Metrics

Base Metrics:

Base metrics are derived from the data gathered by the test analyst during test case development and execution.

This data is tracked throughout the test lifecycle, for example the total no. of test cases developed for a project, the no. of test cases that need to be executed, or the no. of test cases passed/failed/blocked.
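Collecting base metrics can be sketched as a simple tally over an execution log. In the Python snippet below, the status labels (`passed`, `failed`, `blocked`, `not_executed`) and the function name `collect_base_metrics` are assumptions chosen for illustration; the counts mirror the figures used in the worked example later in this article. Blocked test cases are counted as executed, which matches how the article's formulas treat them.

```python
from collections import Counter


def collect_base_metrics(statuses):
    """Tally raw test case statuses into base metrics."""
    counts = Counter(statuses)
    executed = counts["passed"] + counts["failed"] + counts["blocked"]
    return {
        "total_written": len(statuses),
        "executed": executed,
        "not_executed": counts["not_executed"],
        "passed": counts["passed"],
        "failed": counts["failed"],
        "blocked": counts["blocked"],
    }


# Hypothetical execution log: one status entry per test case.
statuses = (
    ["passed"] * 30 + ["failed"] * 26 + ["blocked"] * 9 + ["not_executed"] * 35
)
print(collect_base_metrics(statuses))
```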

Calculated Metrics:

Calculated metrics are derived from the data gathered in base metrics. These metrics are generally tracked by the test lead/manager for test reporting purposes.

Examples of Software Testing Metrics:

Let’s take an example to calculate various test metrics used in software test reports:

Below is the table format for the data retrieved from the test analyst who is actually involved in testing:

Definitions and Formulas for Calculating Metrics:

#1) %ge Test cases Executed: This metric is used to obtain the execution status of the test cases in terms of %ge.

%ge Test cases Executed = (No. of Test cases executed / Total no. of Test cases written) * 100.

So, from the above data,

%ge Test cases Executed = (65 / 100) * 100 = 65%

#2) %ge Test cases not executed: This metric is used to obtain the pending execution status of the test cases in terms of %ge.

%ge Test cases not executed = (No. of Test cases not executed / Total no. of Test cases written) * 100.

So, from the above data,

%ge Test cases not executed = (35 / 100) * 100 = 35%

#3) %ge Test cases Passed: This metric is used to obtain the Pass %ge of the executed test cases.

%ge Test cases Passed = (No. of Test cases Passed / Total no. of Test cases Executed) * 100.

So, from the above data,

%ge Test cases Passed = (30 / 65) * 100 = 46%

#4) %ge Test cases Failed: This metric is used to obtain the Fail %ge of the executed test cases.

%ge Test cases Failed = (No. of Test cases Failed / Total no. of Test cases Executed) * 100.

So, from the above data,

%ge Test cases Failed = (26 / 65) * 100 = 40%

#5) %ge Test cases Blocked: This metric is used to obtain the blocked %ge of the executed test cases. A detailed report can be submitted that specifies the actual reason each test case was blocked.

%ge Test cases Blocked = (No. of Test cases Blocked / Total no. of Test cases Executed) * 100.

So, from the above data,

%ge Test cases Blocked = (9 / 65) * 100 = 14%
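The five execution percentages above can be reproduced with a short Python sketch. The helper names `percentage` and `execution_report` are assumptions for illustration; the input figures (100 written, 65 executed, 30 passed, 26 failed, 9 blocked) are the ones used in the calculations above.

```python
def percentage(part: int, whole: int) -> float:
    """Return part as a percentage of whole."""
    if whole <= 0:
        raise ValueError("whole must be positive")
    return 100 * part / whole


def execution_report(total_written, executed, passed, failed, blocked):
    """Compute metrics #1 to #5 from the base counts."""
    return {
        "executed": percentage(executed, total_written),
        "not_executed": percentage(total_written - executed, total_written),
        # Pass, fail and blocked rates use executed test cases as the base.
        "passed": percentage(passed, executed),
        "failed": percentage(failed, executed),
        "blocked": percentage(blocked, executed),
    }


report = execution_report(
    total_written=100, executed=65, passed=30, failed=26, blocked=9
)
for name, value in report.items():
    print(f"{name}: {value:.0f}%")
```

With these figures the loop prints 65%, 35%, 46%, 40% and 14%, matching the hand calculations. Note the change of denominator: the first two metrics divide by test cases written, the last three by test cases executed.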

#6) Defect Density = No. of Defects identified / size

(Here, “size” is measured in requirements, so the Defect Density is the number of defects identified per requirement. Similarly, Defect Density can be calculated as the number of defects identified per 100 lines of code, or per module, etc.)

So, from the above data,

Defect Density = (30 / 5) = 6
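Because the unit of "size" is a choice, a sketch of this metric only needs a division with the size passed in explicitly. The function name `defect_density` is an assumption, and the 2,000-line figure in the second call is hypothetical; only the 30 defects and 5 requirements come from the example above.

```python
def defect_density(defects_found: int, size: float) -> float:
    """Defects per unit of size (requirements, modules, 100 lines of code, ...)."""
    if size <= 0:
        raise ValueError("size must be positive")
    return defects_found / size


# From the example above: 30 defects across 5 requirements.
print(defect_density(30, 5))  # 6.0 defects per requirement

# Hypothetical: the same 30 defects in 2,000 lines, measured per 100 lines.
print(defect_density(30, 2000 / 100))  # 1.5 defects per 100 lines
```

Densities are only comparable when they share a size unit, so it helps to state the unit next to every reported value.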

#7) Defect Removal Efficiency (DRE) = (No. of Defects found during QA testing / (No. of Defects found during QA testing + No. of Defects found by End-user)) * 100

DRE is used to identify the test effectiveness of the system.

Suppose that during development and QA testing we identified 100 defects.

After QA testing, during alpha and beta testing, the end user/client identified 40 defects that could have been identified during the QA testing phase.

Now, the DRE is calculated as:

DRE = [100 / (100 + 40)] * 100 = [100 /140] * 100 = 71%
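The DRE calculation can be sketched in Python as follows. The function name `defect_removal_efficiency` is an assumption for illustration; the inputs are the 100 QA defects and 40 end-user defects from the example above.

```python
def defect_removal_efficiency(found_in_qa: int, found_by_end_user: int) -> float:
    """Share of all known defects that QA testing caught, as a percentage."""
    total = found_in_qa + found_by_end_user
    if total == 0:
        raise ValueError("no defects recorded, DRE is undefined")
    return 100 * found_in_qa / total


print(f"{defect_removal_efficiency(100, 40):.0f}%")  # 71%
```

Since the denominator includes the QA defects themselves, DRE always falls between 0% and 100%.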

#8) Defect Leakage: Defect Leakage is the metric used to identify the efficiency of the QA testing, i.e. how many defects were missed during QA testing.

Defect Leakage = (No. of Defects found in UAT / No. of Defects found in QA testing) * 100

Suppose that during development and QA testing we identified 100 defects.

After QA testing, during alpha and beta testing, the end user/client identified 40 defects that could have been identified during the QA testing phase.

Defect Leakage = (40 /100) * 100 = 40%
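A minimal Python sketch of the leakage formula, using the same 40 and 100 defect counts; the function name `defect_leakage` is an assumption for illustration.

```python
def defect_leakage(found_in_uat: int, found_in_qa: int) -> float:
    """Defects that slipped past QA, as a percentage of defects QA did find."""
    if found_in_qa <= 0:
        raise ValueError("found_in_qa must be positive")
    return 100 * found_in_uat / found_in_qa


print(defect_leakage(40, 100))  # 40.0
```

Unlike DRE, this formula divides by the QA defects alone, so the result can exceed 100% when more defects are found after QA than during it.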

#9) Defects by Priority: This metric gives the no. of defects identified at each Severity / Priority level, which is used to judge the quality of the software.

%ge Critical Defects = No. of Critical Defects identified / Total no. of Defects identified * 100

From the data available in the above table,

%ge Critical Defects = 6/ 30 * 100 = 20%

%ge High Defects = No. of High Defects identified / Total no. of Defects identified * 100

From the data available in the above table,

%ge High Defects = 10/ 30 * 100 = 33.33%

%ge Medium Defects = No. of Medium Defects identified / Total no. of Defects identified * 100

From the data available in the above table,

%ge Medium Defects = 6/ 30 * 100 = 20%

%ge Low Defects = No. of Low Defects identified / Total no. of Defects identified * 100

From the data available in the above table,

%ge Low Defects = 8/ 30 * 100 = 27%
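The four priority percentages can be computed in one pass over the defect counts. In this Python sketch the function name `severity_breakdown` is an assumption; the counts (6 critical, 10 high, 6 medium, 8 low) are the ones used in the calculations above.

```python
def severity_breakdown(counts: dict) -> dict:
    """Percentage of all defects at each severity level, rounded to 2 decimals."""
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no defects recorded")
    return {level: round(100 * n / total, 2) for level, n in counts.items()}


defects = {"Critical": 6, "High": 10, "Medium": 6, "Low": 8}
print(severity_breakdown(defects))
# {'Critical': 20.0, 'High': 33.33, 'Medium': 20.0, 'Low': 26.67}
```

The article rounds the Low share up to 27%; the sketch keeps two decimals so the rounded shares still add up to 100.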

Recommended reading => How to Write an Effective Test Summary Report

Conclusion:

The metrics described in this article are mainly used to generate daily/weekly status reports with accurate data during the test case development and execution phases. They are also useful for tracking the project status and the quality of the software.

About the author: This is a guest post by Anuradha K. She has 7+ years of software testing experience and currently works as a consultant for an MNC. She also has good knowledge of mobile automation testing.

Which other test metrics do you use in your project? As usual, let us know your thoughts/queries in comments below.
